Mimmo Cangiano Belcuore

I’m an architect and technology builder working across biology, software, and intelligent systems. I build integrated products that combine hardware, data, and AI, focusing on how systems behave in the real world, not just in isolation.

The Reality Stack is where I explore these ideas. Writing is a tool for thinking: to break down complex systems, move across layers, and understand how physical, digital, human, and economic forces interact.

Most problems are treated within a single domain. I’m interested in what happens when you design across them.

Business Stories

-

I’m building a new class of medical system that combines sensors, embedded software, and AI to rethink how health data is captured and used. The mandate is simple on paper: move from episodic, fragmented measurements to continuous, real-world insight. I hold the thread across system architecture, product definition, and experience—connecting hardware, data pipelines, and clinical intent into a single coherent system.

Most healthcare innovation treats the problem as one of data aggregation. More sources, better dashboards, cleaner summaries. But the real constraint sits upstream. If the signal is wrong, no model fixes it.

Clinical-grade data is not just about accuracy—it’s about context fidelity. A heart rate reading detached from posture, motion, or environment is ambiguous. Yet most systems optimize for collection volume, not interpretability. This creates a quiet failure mode: high-confidence outputs built on low-fidelity inputs.

The system inverts that logic. Instead of starting with AI models, it starts with signal provenance—how data is generated, under what conditions, and what assumptions travel with it. This pushes decisions down into the physical layer: sensor selection, placement, calibration cycles, power constraints. Hardware is not a container for software; it defines the boundary conditions of truth.
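One way to make signal provenance concrete is to keep the capture context attached to every reading, so ambiguity is visible before any model sees the data. The sketch below is illustrative only: the field names, the 30-day calibration window, and the `interpretability_issues` helper are assumptions for the example, not the system's actual schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Provenance:
    """Conditions under which a sample was captured (all fields hypothetical)."""
    sensor_id: str
    posture: Optional[str]          # e.g. "supine", "standing"; None if unknown
    motion_level: Optional[float]   # 0.0 (still) to 1.0 (vigorous); None if unknown
    days_since_calibration: int


@dataclass(frozen=True)
class HeartRateSample:
    bpm: float
    provenance: Provenance


def interpretability_issues(sample: HeartRateSample,
                            max_calibration_age_days: int = 30) -> list[str]:
    """Return the reasons a sample is ambiguous; an empty list means usable."""
    p = sample.provenance
    issues = []
    if p.posture is None:
        issues.append("posture unknown")
    if p.motion_level is None:
        issues.append("motion unknown")
    if p.days_since_calibration > max_calibration_age_days:
        issues.append("calibration stale")
    return issues
```

A reading with full context passes cleanly, while the same heart rate without posture or motion is flagged as ambiguous rather than silently fed downstream.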

But solving the physical layer alone doesn’t create a product. Healthcare operates under fragmented incentives—patients, providers, regulators, payers—each optimizing for a different outcome. A continuous sensing system introduces temporal asymmetry: patients live in real time, but care systems react in discrete events. Bridging that gap requires designing not just data flows, but decision surfaces—where raw signals become actionable evidence.

This is where most “AI in healthcare” efforts collapse. They produce predictions without ownership. Who acts? Under what liability? With what threshold of certainty?

I frame the platform as an evidence engine, not a prediction engine. The goal is not to say “what might happen,” but to surface “what is happening now, with enough confidence to act.” That shift cascades through the stack: model design, UX, alerting logic, even how data is stored and audited.

At the system level, this creates a different kind of product. Not an app, not a device, but a closed-loop system where sensing, interpretation, and response are tightly coupled. Distribution is not just shipping hardware—it’s embedding into care pathways that were never designed for continuous input.

The risk is obvious: complexity across every layer. The upside is asymmetry. Once the system controls both data generation and interpretation, it becomes difficult to replicate with point solutions.

Stack takeaway

- Owning the physical layer of data generation creates leverage; models without signal control remain downstream commodities.

- Healthcare value emerges at the decision boundary, not the prediction layer; evidence beats inference when action is required.

-

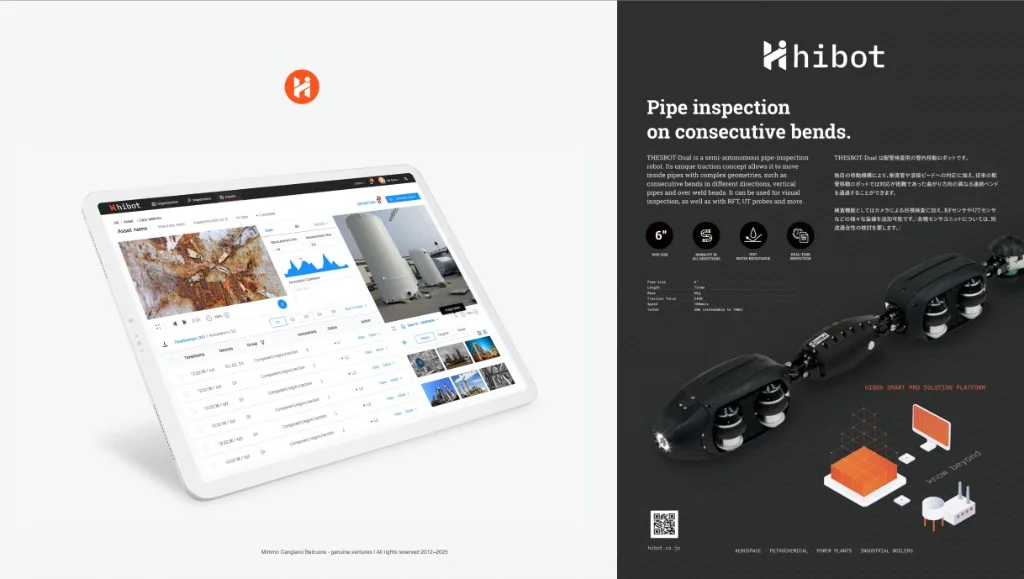

I spent one year inside a venture-backed robotics company building snake-like, sensor-rich robots for infrastructure inspection. The mandate was to move from an R&D product company to a Robotics-as-a-Service model that operators could buy, run, and trust. I held the thread from positioning through platform and experience design, keeping hardware behavior, service operations, and customer expectations aligned.

The robots could access places people could not. The harder question was commercial: what was the customer actually purchasing? A machine, or reliable evidence about asset condition when risk and liability are high?

Most inspection programs fail on consistency, not camera availability. Runs vary, evidence is hard to compare over time, and interpretation risk remains with the asset owner. We reframed the service around repeatable evidence, clear data lineage, and operational handoffs that matched how maintenance decisions are really made.

What shipped was a more honest service contract between field capture, interpretation, and operations. The durable product was not robot novelty. It was traceable, decision-ready evidence that could support recurring maintenance workflows.

Stack takeaway

- RaaS value comes from accountable evidence and liability clarity before it comes from robotics performance.

- Service boundaries and data boundaries must align; when contracts and operational ownership diverge, the model breaks even with strong hardware.

-

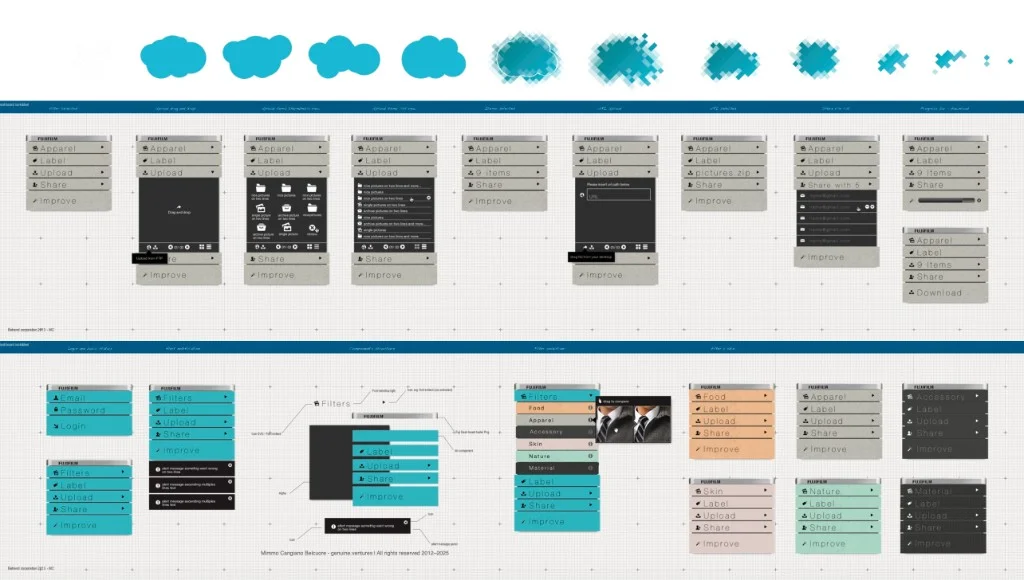

This project sat inside a global camera manufacturer with deep image processing know-how still locked inside devices. The mandate was to expose that capability as a B2B web service for bulk image workflows, without diluting technical credibility. I held the thread from service framing through UX, CX, and brand so what the platform promised matched what the pipeline could reliably deliver at volume.

The core problem was not algorithm quality. It was distribution. Commercial image operations now run in browsers, DAM systems, and batch pipelines, while the strongest IP often still lives inside hardware boundaries.

Operators did not need prettier demos. They needed predictable output under load: thousands of files, uneven inputs, strict brand requirements, and clear failure behavior. We designed around that reality by defining service behavior across ingest, processing, delivery, and support, then translating those choices into an interface that communicated confidence rather than novelty.

What shipped was a cloud-native B2B service for bulk enhancement and image management that brought in-house imaging IP into SME workflows without requiring customers to adopt hardware-centric processes.

Stack takeaway

- Hardware-era IP becomes market leverage only when it is packaged as a service unit buyers can operate and trust at scale.

- In bulk imaging, operational semantics matter as much as core algorithms; latency, error handling, and traceability are part of the product.

-

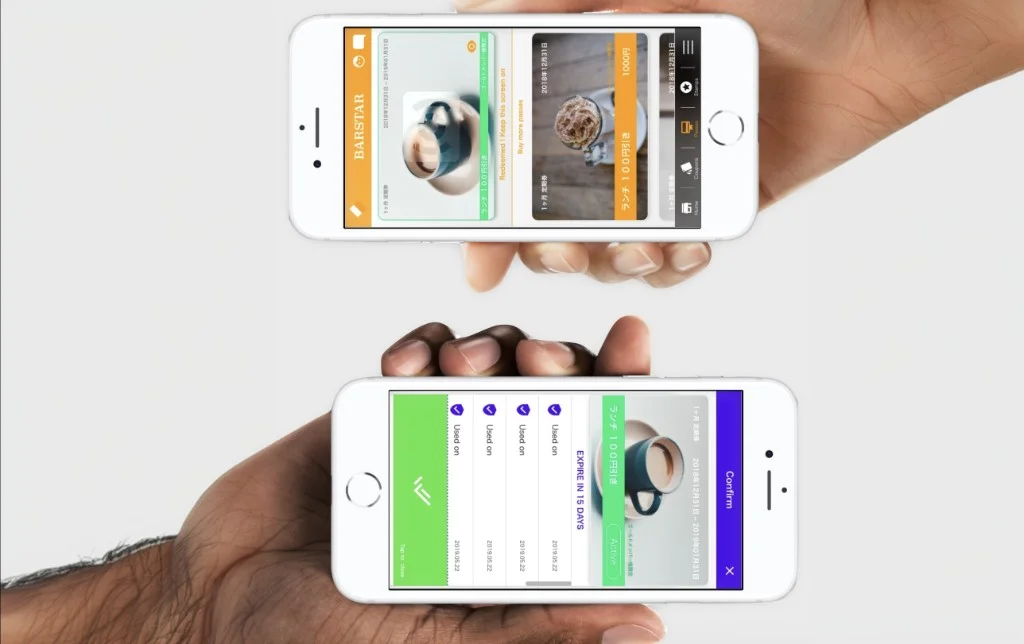

The work sat inside a Japanese marketing-technology company that had done mobile messaging long before smartphones made those channels mainstream. The mandate was clear: help local shops keep a direct relationship with customers across store visits, SMS, and apps, without handing that relationship to larger platforms. I led the product-and-service thread from system mapping to journey design, so managers, customers, and admin teams would not end up with three disconnected products.

Most retail teams think the hard part is campaign creativity. For local merchants, it is usually continuity. Can they keep customer preferences, consent, and visit history coherent when staff changes and time is scarce? For customers, the question is trust: why share data, and what do I get back beyond generic offers?

We made one structural choice early: one shared service core for data and logic, with channels acting as thin surfaces. That only works if journeys are defined before features multiply. I mapped who needs to do what, in which order, on which device, then set reusable UX patterns so each release extended the system instead of fragmenting it.
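The "shared core, thin surfaces" idea can be sketched in a few lines. This is a minimal illustration under stated assumptions: `ServiceCore`, `CustomerRecord`, and `SmsSurface` are hypothetical names, and the consent rule is deliberately simplistic. The point is only that records and rules live in one place while each channel holds neither.

```python
from dataclasses import dataclass, field


@dataclass
class CustomerRecord:
    customer_id: str
    consents: set[str] = field(default_factory=set)   # channels the customer opted into
    visits: list[str] = field(default_factory=list)   # ISO dates of store visits


class ServiceCore:
    """Single owner of records and rules; every surface goes through it."""

    def __init__(self) -> None:
        self._records: dict[str, CustomerRecord] = {}

    def register(self, customer_id: str) -> CustomerRecord:
        # Returns the existing record if there is one, otherwise creates it.
        return self._records.setdefault(customer_id, CustomerRecord(customer_id))

    def grant_consent(self, customer_id: str, channel: str) -> None:
        self.register(customer_id).consents.add(channel)

    def record_visit(self, customer_id: str, date: str) -> None:
        self.register(customer_id).visits.append(date)

    def may_contact(self, customer_id: str, channel: str) -> bool:
        record = self._records.get(customer_id)
        return record is not None and channel in record.consents


class SmsSurface:
    """Thin channel: asks the core for permission, stores no customer data."""

    def __init__(self, core: ServiceCore) -> None:
        self.core = core

    def send_offer(self, customer_id: str, text: str) -> bool:
        if not self.core.may_contact(customer_id, "sms"):
            return False
        # A real surface would hand the message to an SMS gateway here.
        return True
```

Adding an app or web surface in this shape means writing another thin class against the same core, so consent and visit history stay consistent across channels by construction.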

What shipped was a three-surface platform with one backbone: one application for shop managers, one for end customers, and one for administration, all tied to the same records and rules. We also shipped a progressive web route so adoption did not depend only on app stores. The practical result was simple: clearer permissions, consistent history, and cleaner handoffs across roles.

Stack takeaway

- Omni-channel is an ownership problem: if the shop does not own one trusted customer record across physical and digital touchpoints, each new channel becomes a new silo.

- Multi-sided products scale core-system mistakes fast: unclear flows in the backbone become support cost and trust loss, no matter how polished the interface is.

-

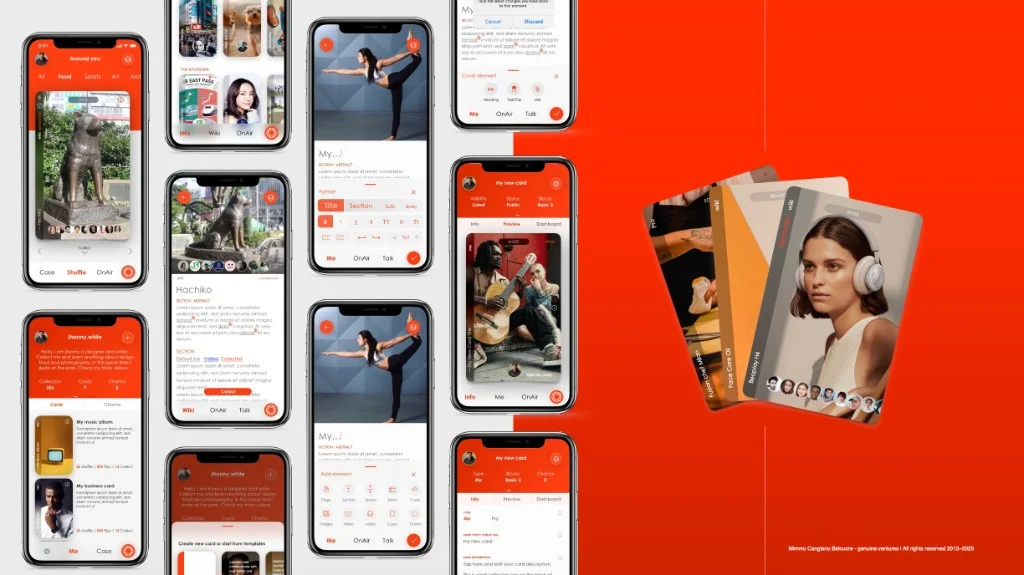

I worked with an early-stage venture exploring a difficult question: can we design exchange systems for real local value, not just optimize another listing-and-checkout flow? The mandate was to turn that question into an operating product model. I held the thread across market analysis, business strategy, brand, and product design so the venture story and the product rules described the same economy.

The central tension was visibility. Formal metrics capture only part of how value is created in communities. Informal services, care work, and small peer exchanges often sit outside the models institutions optimize for. If a marketplace ignores that, it becomes another catalog. If it models it, the product must define what counts as value and how trust is established.

We designed a poly-marketplace approach: one core system that could support multiple exchange domains without forcing each community into the same transaction script. That required continuity of identity, consent, and reputation across different surfaces, while leaving room for domain-specific participation rules.

This framing helped align early stakeholders and secure initial funding because investors, builders, and early users could point to the same object: not just an app interface, but a working model of participation.

Stack takeaway

- Marketplace failure usually starts in value definition: if the platform cannot clearly model what is being exchanged, interface quality cannot rescue trust.

- Venture narrative and product semantics are one system in early-stage markets; if they diverge, liquidity and alignment break before scale begins.

-

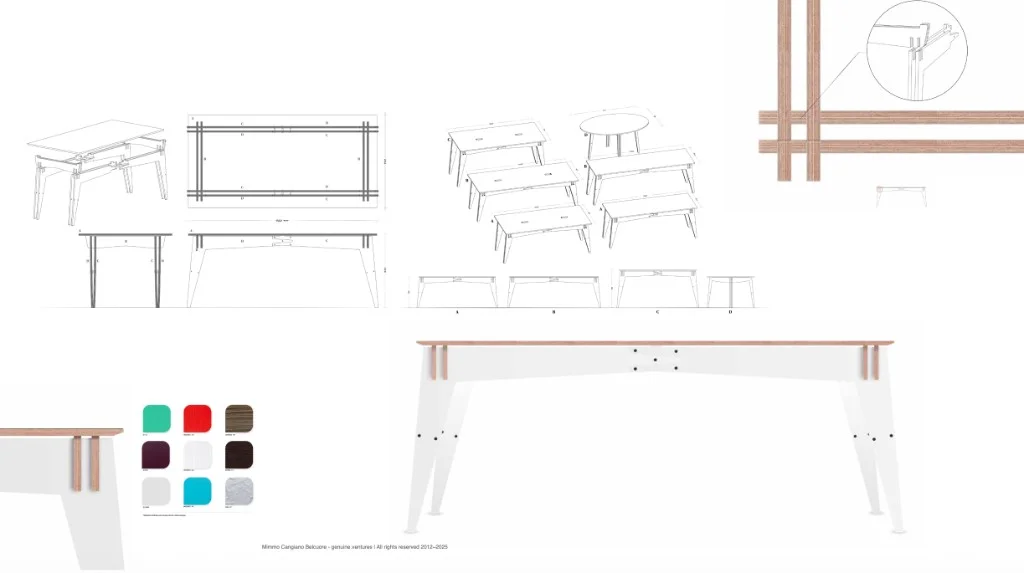

The brief was practical: furnish a new three-story apartment in London while cutting material and energy waste across the full lifecycle, not just in the showroom story. That pushed the work from style toward geometry, sheet yield, transport, and assembly logic. I held the thread from product design through brand and experience, then into prototyping and CNC constraints so the result could be produced repeatedly without relying on artisanal exceptions.

In furniture, “sustainable” is often reduced to material labels. The bigger lever is logistics design. Do you ship bulky finished goods across borders, or do you ship files and produce near where the object is needed? I designed a family of laminated birch plywood tables around nested cutting logic, treating each sheet as a hard budget rather than a soft suggestion.
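Treating the sheet as a hard budget can be expressed as a simple area check. The sketch below is an assumption-laden illustration, not the nesting logic used in the project: it assumes rectangular parts, ignores kerf and grain direction, and uses a 2440 x 1220 mm sheet as a placeholder size. It can reject a part list that cannot possibly fit, but passing the check does not prove that a valid nested layout exists.

```python
def sheet_yield(parts_mm, sheet_mm=(2440, 1220)):
    """Fraction of sheet area used by rectangular parts given as (width, height).

    Area check only: raises if the parts exceed the sheet budget, but a
    passing result does not guarantee the parts can actually be nested.
    """
    sheet_area = sheet_mm[0] * sheet_mm[1]
    used = sum(w * h for w, h in parts_mm)
    if used > sheet_area:
        raise ValueError("parts exceed the sheet budget")
    return used / sheet_area


# Hypothetical part list for one table: two tops and one shelf, in millimetres.
table_parts = [(1200, 600), (1200, 600), (600, 400)]
print(f"yield: {sheet_yield(table_parts):.1%}")
```

A design iteration that pushes the part list over budget fails immediately, which is the behavior a "hard budget" implies; a real workflow would follow this with an actual nesting step in CAM software.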

Distributed manufacturing is not automatically better. It depends on local machine capability, process discipline, and quality control at handoff points. The product had to encode those constraints in the digital definition itself, so the same files could produce reliable outcomes across different CNC environments.

What shipped was a repeatable model: CNC-native product architecture, on-demand local production, and a brand narrative that explained why the object looked and behaved the way it did.

Stack takeaway

- Local production only scales when the digital product definition includes manufacturing constraints from day one, not as a late-stage handoff.

- Environmental performance follows logistics architecture: if you do not change what moves through transport, you mostly change messaging, not footprint.

-

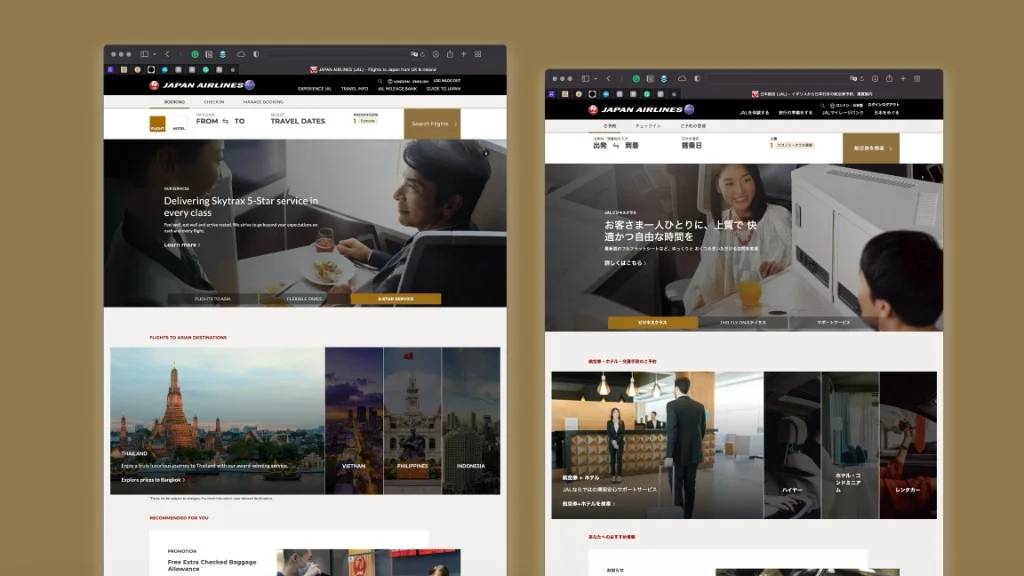

The project sat inside a global full-service airline where routes cross languages, cultures, and booking habits every day. Leadership wanted data-driven personalization to improve relevance without turning the web experience into a patchwork of conflicting messages. I helped define the method across teams: how segmentation should work, how journeys should be designed, and how UI and content should stay coherent from first touch to conversion. We started with a narrow but meaningful corridor: business travelers from English-speaking markets flying into Asia.

Personalization usually fails at handoffs. Data teams define segments, brand teams define voice, web teams ship components, and experiment teams optimize metrics. The traveler still gets friction at the seams. The real job was not producing more segments. It was creating one model teams could implement without reinterpreting the logic each sprint.

We introduced web patterns that adapted offers and content to segment and journey stage instead of defaulting to one global average. In parallel, the marketing organization gained a repeatable operating model to design and run those programs. In targeted business-travel slices, conversion rose by roughly fifteen percent once segmentation logic, content, and routing were aligned.

Stack takeaway

- Customer data platforms (CDPs) create value only when teams share one eligibility and workflow model; otherwise personalization is louder messaging, not better decisions.

- In business travel, corridor, language, and trip intent constrain relevance more than broad demographics; experience design must encode those constraints or trust and conversion drop.

-

Through a Domus Academy program in Milan, I worked with a Japanese oral-care company that needed more than another line extension. The mandate was to define a credible new category based on how people actually live, then turn that insight into product, packaging, and market logic. I held the thread across target research, category framing, product concepting, prototyping, and go-to-market narrative.

Most oral-care shelves assume use happens at home, in private, around fixed routines. Research showed a different reality: people manage confidence and freshness across transit, work, meals, and social moments. The category framing lagged behind behavior.

That gap led to Oralcosmetic: not vanity positioning, but recognition that oral presentation is part of public life. The product direction, Orastick, focused on portable formats that support quick refresh between fixed routines, with packaging and messaging designed to make the usage moment immediately legible.

The value for the business was not a one-off concept. It was a defensible category wedge grounded in observed behavior, with room to scale because it matched where demand was already moving.

Stack takeaway

- Category boundaries fail when they ignore usage context; once behavior shifts to public moments, product logic and positioning must shift with it.

- Research creates value only when it becomes a usable ritual, an object, and a promise, not when it stays as a slide deck of insights.

-

Inside a multi-generation shoemaking business, the mandate was direct: make the craft viable for another century without turning it into a museum story. I set out to compress a full vertical flow into a compact Retail Factory: leather in, finished shoes out, in a footprint small enough for malls and city centers. I held the product and engineering thread from foot capture to digital last modeling, additive manufacturing, pattern generation, and local assembly.

Most people treat custom footwear as a leather-and-stitching story. On the floor, the constraint is earlier: the last. Feet vary person to person, and even left to right. If the last is wrong, everything downstream is elegant but uncomfortable.

We built a workflow that scanned feet through instrumented socks, generated a modifiable digital twin of the last, and adapted geometry to style families. From there, we printed lasts around eighty percent lighter than conventional shop tools, cut patterns, and assembled in place with a target cycle under thirty minutes.

The strategic shift was ownership. The last stopped being an invisible factory tool and became part of the delivered product, reused by the customer as a shoetree. That moved value from hidden inventory to a visible object tied to long-term fit and care.

Stack takeaway

- Custom footwear scales when last geometry becomes a first-class digital asset; fit quality is decided there, not at finishing.

- When production is visible and local, tooling economics and customer trust change together; transparency reshapes both margin logic and product meaning.

-

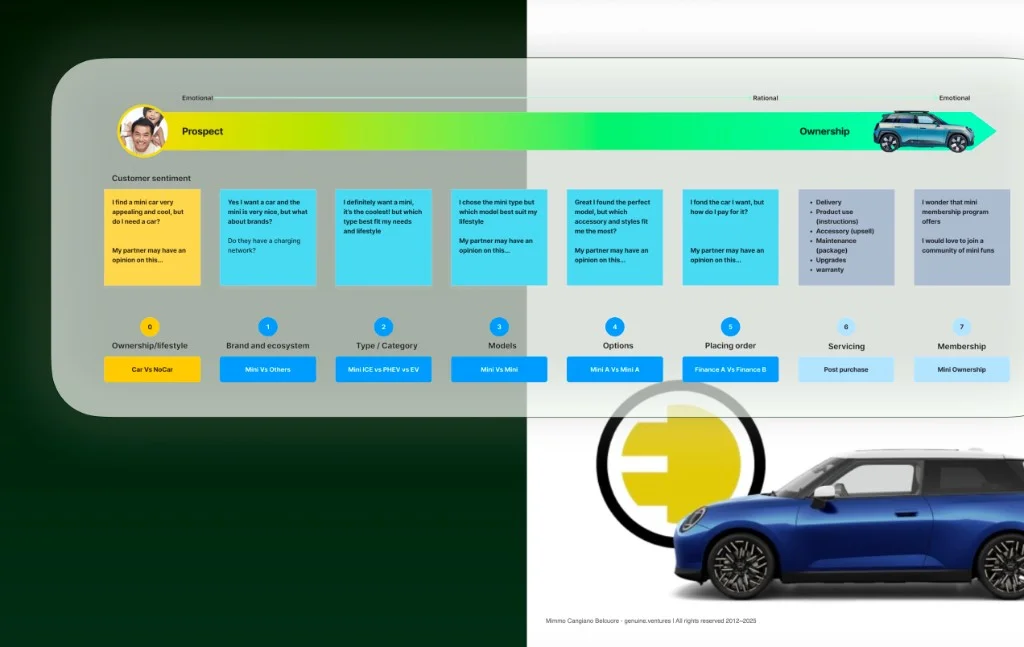

I worked with a heritage automotive brand during the shift to battery-electric vehicles and digital commerce. The mandate was to make pre-order, configuration, purchase, and post-purchase communication feel like one journey instead of disconnected channels. I held the thread from segmentation and journey logic through CRM automation and localized UX, so marketing operations and product experience stayed aligned across markets.

EV buying extends the timeline. Customers move through reservation, allocation, delivery windows, charging concerns, and software updates. In that context, omnichannel is not about adding touchpoints. It is about preserving state: what the customer has already chosen, what was promised, and what needs to happen next.

The recurring risk was tooling that automates campaigns while storefronts, configurators, and local retail constraints still treat the same customer as different records. We aligned segment definitions to real journeys, defined automations around real handoffs between central brand and local channels, and shipped localized UI patterns that clarified differences instead of hiding them.

What changed was operational rather than cosmetic. Teams could run differentiated journeys at scale, keep relationship data cleaner, and support direct digital commerce without breaking legacy distribution paths.

Stack takeaway

- EV commerce is a long decision system: if CRM activates only after delivery, you lose the highest-leverage part of the journey.

- Localization is a system constraint, not a translation task; regulation and channel economics must shape journey logic before automation goes live.